One week serverless with Azure Functions and Slack

Together with some colleagues, I’ve spent this week studying serverless architecture, with a focus on Azure Functions. And I can tell you – it was real fun!

What is it

In my own words…

Serverless architecture doesn’t mean there’s no server anymore. It’s just that, as a developer and architect, you no longer have to worry about it. Instead of a complete service implementation (like with ASP.NET), you start thinking in functions (aka modules, nanoservices, etc.) which you deploy and run in a FaaS (function as a service) environment. For triggering such functions, as well as for the communication between them, you get a lot of help from these service environments and the SDKs provided for them.

So instead of having to write a lot of code for setting up and implementing REST endpoints, queue writers/listeners, CRUD functions for storage, timers and more, you ‘just’ have to configure your functions, and can concentrate completely on the business logic you have to develop.

But enough theory… let’s look at our real life example.

Example with Azure Functions

Isn’t it always the same problem when you’re learning a new technology… what should I implement for learning? After some playing around and discussion, we came up with this little system:

A Slack application that includes several /slash commands – and each command triggers a different (serverless) function in Azure. These commands are:

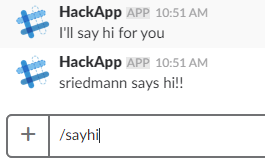

- /sayhi – test function, the App says hi for you in the channel

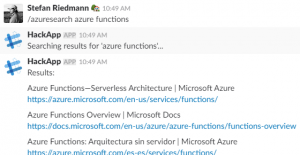

- /azuresearch – to search in Google and have the first 10 results in Slack

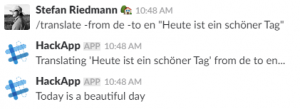

- /translate – to translate a message from one language to another

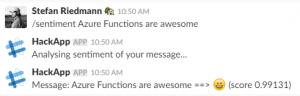

- /sentiment – to analyze the sentiment of a message through Microsoft’s cognitive services

So the system overview looks like this:

So as you can see, there are 5 Azure functions involved:

- Azure Slack Webhook

- Azure Translate Function

- Azure Search Function

- Azure Sentiment Function

- Azure Slack Function

You can find the whole implementation on GitHub.

The Webhook is called by the Slack application itself (configured inside Slack). The interesting thing is – this is the only part of the system that has to be deployed on the internet, so that it has an HTTP endpoint that Slack can reach. All the rest of the system can run locally for dev purposes, or behind a firewall.
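To make the Webhook’s job a bit more concrete: Slack delivers a slash command as a form-encoded POST body with fields such as `command`, `text`, `user_name` and `response_url`. The following is only a language-neutral sketch in Python (the repository itself is C#). The queue names and the `route_command` helper are my own assumptions for illustration, not names from the project.

```python
from urllib.parse import parse_qs

# Hypothetical mapping from slash command to the request queue of its function.
COMMAND_QUEUES = {
    "/sayhi": "sayhi-requests",
    "/azuresearch": "search-requests",
    "/translate": "translate-requests",
    "/sentiment": "sentiment-requests",
}

def parse_slash_command(body: str) -> dict:
    """Flatten Slack's form-encoded body into a dict (first value per key)."""
    fields = parse_qs(body, keep_blank_values=True)
    return {key: values[0] for key, values in fields.items()}

def route_command(body: str):
    """Return (queue_name, payload) for a known command, or None otherwise."""
    payload = parse_slash_command(body)
    queue_name = COMMAND_QUEUES.get(payload.get("command", ""))
    if queue_name is None:
        return None
    return queue_name, payload
```

The Webhook would then write `payload` to the queue called `queue_name` and return to Slack right away.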

The ‘real’ business functions (the equivalents of the slash commands) are each triggered by a request on their own specific queue. They then send their messages for Slack through a common queue. This common queue triggers the last function, the ‘Azure Slack Function’, which sends an HTTP POST back to Slack. So the processing of a slash command from Slack happens asynchronously.

It doesn’t matter at all where the business functions are currently running – they just need to be able to communicate with their queues.
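The flow described above can be simulated in a few lines. This is a rough sketch with in-memory queues standing in for the Azure storage queues. All names are hypothetical, the translation is a placeholder, and the `drain` helper only plays the role that the Functions runtime has in the real system.

```python
import queue

# In-memory stand-ins for the storage queues (names are made up).
translate_requests = queue.Queue()
slack_outbox = queue.Queue()

def webhook(command: str, text: str) -> str:
    """Entry point: enqueue the request and answer Slack immediately."""
    translate_requests.put({"command": command, "text": text})
    return "Working on it..."

def translate_function(request: dict) -> None:
    """Business function: triggered by its own queue, writes to the common queue."""
    # Placeholder for the real translation call.
    slack_outbox.put({"text": f"translated: {request['text']}"})

def slack_function(message: dict, sent: list) -> None:
    """Last step: would HTTP POST to Slack; here it only records the text."""
    sent.append(message["text"])

def drain(sent: list) -> None:
    """Deliver every queued message to the function that listens on that queue."""
    while not translate_requests.empty():
        translate_function(translate_requests.get())
    while not slack_outbox.empty():
        slack_function(slack_outbox.get(), sent)
```

Note that `webhook` returns before any business logic has run. That is the asynchronous part: the answer reaches Slack later, through the common queue.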

Here you can see what the commands and answers look like in Slack:

/sayhi

/translate

/azuresearch

/sentiment

The fun about it

Setting up the cloud

There’s no need for me to repeat what is already well documented by Microsoft. So you can follow this tutorial for example.

But be aware that many or even most of the examples out there go the pure browser way – because you can create, configure and program the whole function entirely in the browser. There, they’re using ‘.csx’ files (C# script). You can download them with the project and open them in Visual Studio. But still, that doesn’t seem the best way to me when working on a complex system and more complex function code.

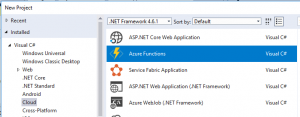

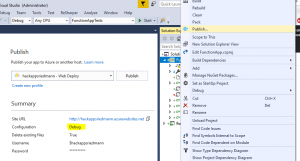

So of course, you can also create the function app entirely in Visual Studio:

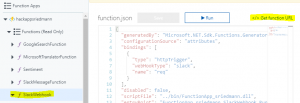

There are just some attributes you have to add to the code that you (in some cases) don’t need in the browser-scripting way – because there, you can configure HTTP or queue triggers through the frontend. You’ll see the attributes in the ‘Programming’ section of this post.

I’m planning to write a post about all the different attributes available for functions, and how to write your own attributes.

When working from Visual Studio, there are two main things to consider:

- You have to create a local.settings.json file containing app settings and connection strings. In the cloud, you have to configure the same entries under Application Settings.

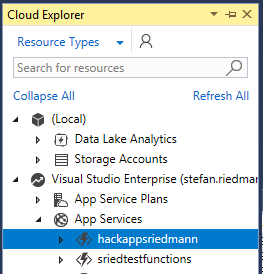

- You should log in to Azure through Visual Studio’s Cloud Explorer. This allows you to publish the FunctionApp, watch storage contents and see debug output from the cloud.
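To illustrate the first point: a local.settings.json file keeps its entries under a `Values` object, and in the cloud the Application Settings reach your code as environment variables. The sketch below (Python, stdlib only) shows one way to read a setting so that the same code works in both places. `SlackWebhookUrl` is a made-up entry, and the two helper functions are assumptions of mine, not part of any Azure SDK.

```python
import json
import os

# Shape of a local.settings.json file. "SlackWebhookUrl" is a made-up entry.
SAMPLE_LOCAL_SETTINGS = {
    "IsEncrypted": False,
    "Values": {
        "AzureWebJobsStorage": "UseDevelopmentStorage=true",
        "SlackWebhookUrl": "https://example.invalid/hook",
    },
}

def load_local_values(path: str) -> dict:
    """Return the 'Values' section of the settings file, or {} if it is absent."""
    if not os.path.exists(path):
        return {}
    with open(path, encoding="utf-8") as handle:
        return json.load(handle).get("Values", {})

def get_setting(name: str, local_values: dict) -> str:
    """Prefer an environment variable (cloud), fall back to the local file."""
    value = os.environ.get(name, local_values.get(name))
    if value is None:
        raise KeyError(f"missing setting: {name}")
    return value
```

If an entry exists locally but was forgotten under Application Settings, `get_setting` fails loudly in the cloud instead of silently running with an empty value.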

Setting up the Slack app

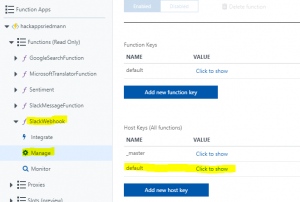

How to create a Slack app is documented here. When setting up slash commands, you assign them all the same endpoint URL, which you can get in the Azure portal:

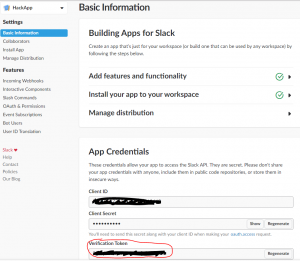

Once you have created the app, go to the API page of Slack (https://api.slack.com/apps/XXXX/general):

There you will find the verification token. You’ll need to define this as the ‘default’ Host Key of the Webhook function, because the Azure Functions SDK supports Slack commands natively and expects this token there. You’ll have to delete the existing entry and create a new one, so you can copy the token from Slack directly.
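In this setup the SDK does the token check for you, so you don’t write it yourself. Conceptually, though, it comes down to comparing the `token` field of the incoming request with the configured value. Here is a minimal sketch of such a comparison in Python. The function name is hypothetical, and this is not how the SDK is actually implemented.

```python
import hmac

def is_valid_token(received: str, expected: str) -> bool:
    """Compare the token Slack sent with the configured one in constant time."""
    return hmac.compare_digest(received.encode("utf-8"), expected.encode("utf-8"))
```

Using `hmac.compare_digest` instead of `==` avoids leaking, through timing differences, how many leading characters of a guessed token were correct.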

Programming

Programming an Azure Function in C# is like writing a console application. You’ve got a static ‘Run’ method which is decorated with attributes – on the method itself and optionally on the different input parameters.

So thanks to those attributes, the Azure Functions SDK takes care of the integration with REST endpoints, triggers and output queues, for example. This makes it especially easy to unit test a function outside a runtime environment – just give it mocked implementations for the different inputs and outputs, and ignore the attributes for testing!
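The testing idea is independent of C#, so here is a small sketch of it in Python. `say_hi` is a hypothetical stand-in for a business function, not the code from the repository: it receives its input, an output collector and a logger as plain parameters, and the test simply passes in a list and a list’s `append` method as mocks.

```python
import unittest

def say_hi(request: dict, outbox: list, log) -> None:
    """Hypothetical business function: one request in, one Slack message out."""
    log(f"sayhi requested by {request['user_name']}")
    outbox.append({"text": f"{request['user_name']} says hi!"})

class SayHiTest(unittest.TestCase):
    def test_emits_one_greeting(self):
        outbox, log_lines = [], []
        # No runtime, no queues: plain lists act as output queue and logger.
        say_hi({"user_name": "stefan"}, outbox, log_lines.append)
        self.assertEqual(outbox, [{"text": "stefan says hi!"}])
        self.assertEqual(len(log_lines), 1)
```

Because the function only knows its parameters, nothing in the test has to know about triggers, queues or the hosting environment.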

You can see the whole solution in my GitHub account:

https://github.com/StefanRiedmann/AzureFunctionsForSlack

Debugging

When debugging locally for the first time, Visual Studio automatically installs the Azure Functions CLI. After that, everything works as you’d expect.

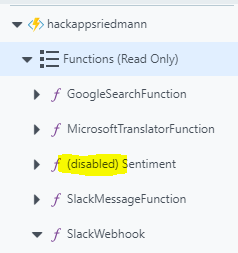

When you’re working with queues as input and/or output, a nice trick is to use a shared Azure storage account in the cloud. The whole system runs in Azure, and you run only the function you’re working on locally. For that, you have to disable the function in Azure, so it doesn’t steal away the queue messages. This allows you to run just a little piece of the whole system locally, without setting up everything.

There is just one issue still – the ‘Disable’ function in Azure doesn’t work if the code is being deployed as a DLL (like from Visual Studio or from your build pipeline). In that case, you have to deactivate the functions you don’t want to run in the cloud with the [Disable] attribute, and publish the FunctionApp to Azure again. I hope Microsoft will find a solution for that.

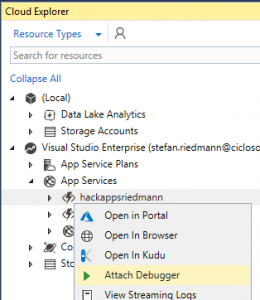

If you don’t want to run the system distributed between the cloud and your local machine, but still need the REST endpoints of the cloud, you can also debug completely online. You need to sign in to Azure with the Cloud Explorer of Visual Studio. Just be sure you published the app in ‘Debug’ mode. And be sure you have a good internet connection!

Monitoring

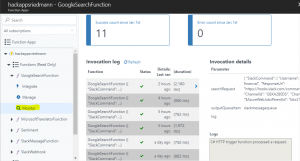

Function log

In the Azure portal, every Function has a ‘Monitor’ page which shows you all the executions and the invocation details. When you use the ‘TraceWriter log’ instance that you get in the function for Debug/Info/Error output, you can see those logs there.

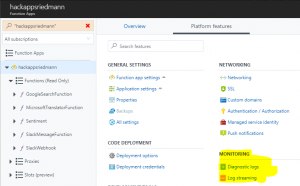

Application log

Don’t forget that an Azure Function is running within a ‘normal’ WebApp in Azure – you just don’t have to set it up yourself. Still, there are sometimes things happening in the WebApp that never reach a function.

Example: You want to call a function via HTTP request, and something is wrong with the URL, authentication or the host key. In that case, the function log itself won’t show you anything, but you can still look at the log of the surrounding WebApp. Just go to

Function App → Platform features → Monitoring

Under ‘Diagnostics logs’, you activate the logging.

Then you can see the log stream in the portal under ‘Log streaming’ or directly in the Visual Studio Cloud Explorer (context menu ‘View Streaming Logs’).

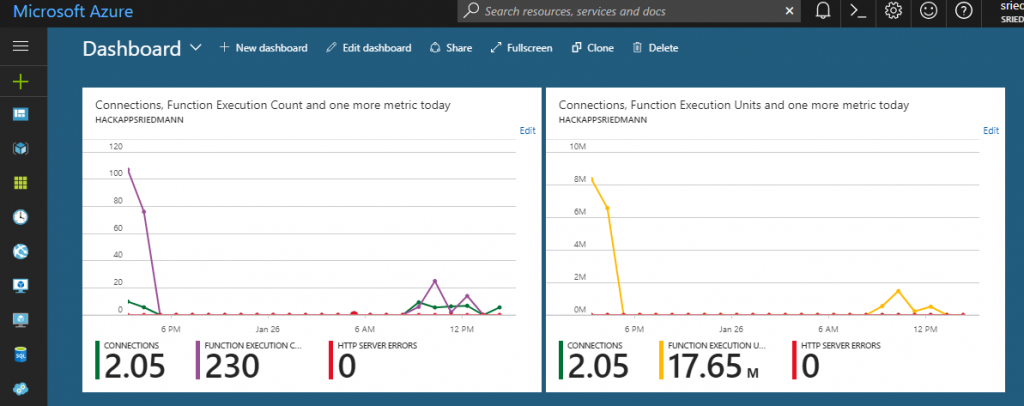

Diagnostics

The Azure Dashboard offers some nice diagnostic graphs to see what’s happening in your FunctionApp – like connections and function execution counts:

Conclusion

The serverless approach helps you to start thinking in a modularised architecture – to see every function separated from the rest of the system – just define its input and output, and try to write the functions in a test-driven way. And if there’s too much business logic inside it – think about splitting it up into more than one function.

Of course you have to maintain a balance between function size and the number of functions. If a function contains too much logic, its testability and reusability may suffer. If there are too many functions, monitoring the whole system can become overwhelming.

The basic idea of those serverless functions is nothing new – modularisation and the use of interfaces and data contracts are basic techniques in modern systems. But… thanks to the FaaS providers and environments (cloud or on premise), implementation, deployment and monitoring of a system become a lot easier. The main advantage is that the FaaS environment can take care of loading/unloading functions, and can dynamically assign them more or fewer resources. In the end, this can be very cost effective and allows a system to grow and shrink depending on the usage.